☆ Yσɠƚԋσʂ ☆

- 1.64K Posts

- 1.33K Comments

For sure, they’ve probably dropped more significant papers in the past year than any other groups. It does seem like the mindset in China is very different overall though. In the states, it’s basically a cult at this point where they’re trying to build a god with AGI. In China, it’s just treated like another tool for automation and companies see it as common infrastructure, akin to Linux, that people will build interesting things on. Hence why pretty much all the models in China re developed on open basis. Everybody there seems to realize that there’s no real path towards monetizing the models themselves.

It looks like you can run a low quant version on a 125gb machine, and apparently performance is still really good. https://github.com/makepad/llama_antirez_deepseek

English

English- •

- huggingface.co

- •

- 2d

- •

It’s entirely possible we might see fairly capable models that can be run with 16 gigs of RAM in the near future. Qwen 3.5 came out in February, and you needed a server with hundreds of gigs of memory to run a 397bln param model. Fast forward to a couple of weeks ago and 3.6 comes out with a 27bln param version beating the old 397bln param one in every way. Just stop and think about how phenomenal that is https://qwen.ai/blog?id=qwen3.6-27b

So, it’s entirely possible people will find ways to optimize this stuff even further this year or the next, and we’ll get an even smaller model that’s more capable.

Mainly data sovereignty. Running a local model means all your data stays on your machine. Any time you use a service you’re sending whatever the model is working on to the company. Another advantage is the price. With services you have to pay a subscription, with local models you get to run them for the price of electricity.

16gb is a bit low unfortunately. You could run a 2 bit quant of latest Qwen, but that’s going to be a severely degraded performance. https://huggingface.co/unsloth/Qwen3.6-35B-A3B-GGUF

Might be worth trying though to see if it does what you need.

the article literally links to how BYD is putting this stuff into production https://interestingengineering.com/energy/catl-ev-battery-7-minute-charge

English

English- •

- interestingengineering.com

- •

- 6d

- •

English

English- •

- github.com

- •

- 6d

- •

it seems like the whole drama didn’t really impact usage of their models by government agencies though

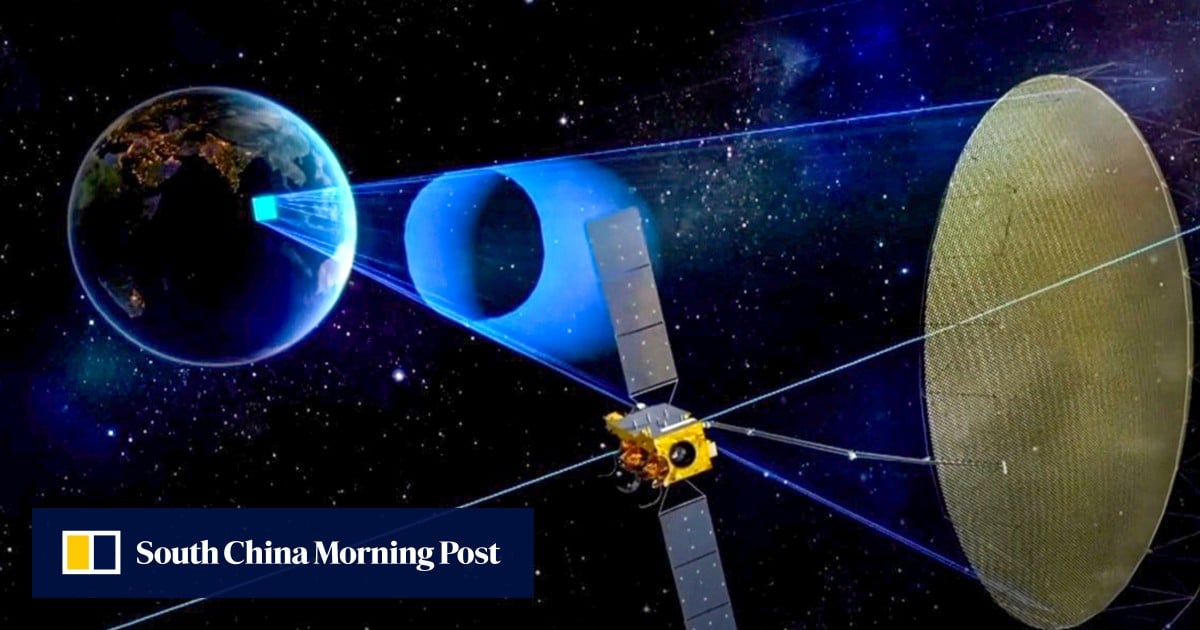

There’s plenty to understand. Synthetic apertures allow getting a far higher resolution than you could otherwise which mean that you can place satellites in higher orbit to get the same coverage you’d get with more satellites in a lower orbit. This is what the article says, but you clearly have no clue regrading the subject and just need to argue for the sake of arguing. Go touch some grass.

English

English- •

- www.scmp.com

- •

- 10d

- •

English

English- •

- www.reuters.com

- •

- 10d

- •

English

English- •

- www.scmp.com

- •

- 13d

- •

Ground effect vehicles are basically airplanes that are forced to fly really, really low. They take off from water and cruise just a few meters above the surface. At that altitude, the air gets compressed between the wing and the ground or water, which creates a huge cushion of extra lift. This lets the vehicle carry way more weight than a normal plane of the same size and power, making it incredibly efficient for hauling cargo over water. The trick is that it only works over flat surfaces like oceans or lakes, and the piloting can be tricky because you’re skimming the waves at high speed without actually being able to climb to a higher altitude. It’s a neat piece of engineering that trades operational flexibility for raw lifting power.

LMFAO